Business should not stand still. To survive, it has to keep developing. Without new ideas, any project begins to decline. Expanding the product range, extending the reach of an advertising campaign, redesigning the site, adding content that raises conversion: how can you tell what benefit each of these changes will bring?

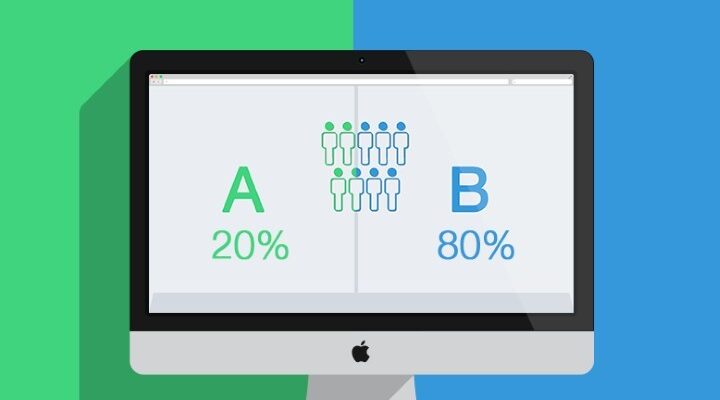

One of the tools for making an informed decision is A/B testing in contextual advertising. It lets you test hypotheses and study consumer preferences in practice.

What is A/B testing in contextual advertising?

A/B testing, often called split testing, is one of the methods of marketing research. It is used to compare variants and identify the elements that best improve target metrics. For example, the task might be to find the variation of an ad title that brings more clicks than the others.

In contextual advertising, A/B testing is used to analyze ad texts, images, and headlines. The specialist running the experiment compares a basic version of the settings with an experimental one.

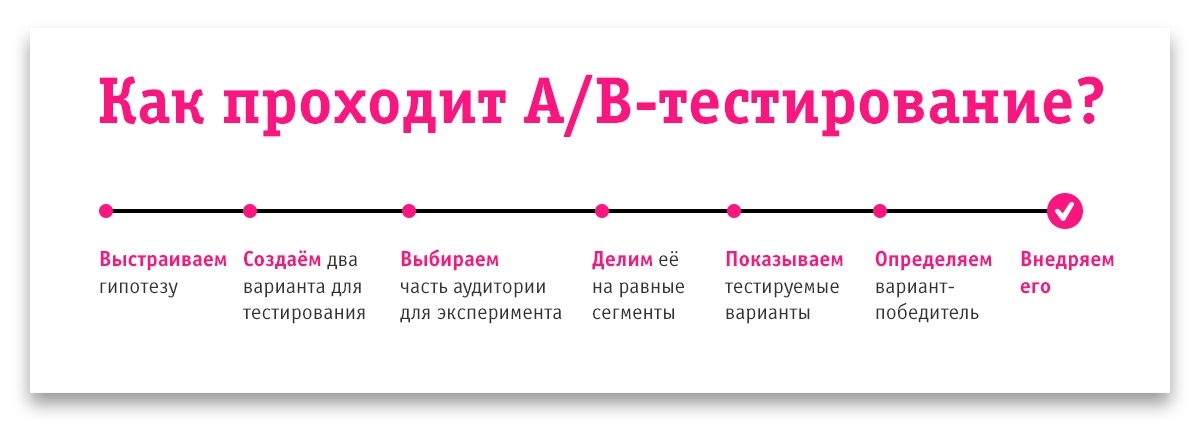

Before testing begins, a hypothesis is formulated. For example: if you add a sense of urgency to your call to action, your ad's CTR will improve.

After making the corresponding change (for example, “Buy Now” instead of “Buy Online”), the specialist sets up impressions for both the basic and the experimental version. When the test period ends, the performance of each ad is analyzed and the better one is selected.
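To make the comparison concrete, here is a minimal Python sketch of comparing the CTR of the basic and the experimental ad. The `AdVariant` class, the `relative_lift` helper, and the impression and click counts are hypothetical, chosen only for illustration; in practice the figures come from your Google Ads reports.

```python
from dataclasses import dataclass


@dataclass
class AdVariant:
    name: str
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        # CTR = clicks / impressions; an ad with no impressions yet has CTR 0
        return self.clicks / self.impressions if self.impressions else 0.0


def relative_lift(base: AdVariant, experiment: AdVariant) -> float:
    """Relative change in CTR of the experimental ad versus the basic one."""
    if base.ctr == 0:
        raise ValueError("basic ad has zero CTR; lift is undefined")
    return (experiment.ctr - base.ctr) / base.ctr


# Hypothetical figures for illustration only
base = AdVariant("Buy Online", impressions=10_000, clicks=200)
experiment = AdVariant("Buy Now", impressions=10_000, clicks=240)

print(f"{base.name}: CTR {base.ctr:.2%}")
print(f"{experiment.name}: CTR {experiment.ctr:.2%}")
print(f"Relative lift: {relative_lift(base, experiment):+.1%}")
```

A higher CTR on its own does not yet make the experimental ad the winner: whether the difference is reliable depends on how much data has been collected.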

Advice! A/B tests should be run regularly. There are no perfect ads or optimal landing pages, so you need to keep looking for ways to increase the return on advertising investment: formulate hypotheses and test changes.

Why run split tests?

Almost any “perfect ad” can be improved: there is no limit to perfection. Running split tests in contextual advertising helps improve performance indicators such as CTR and conversion.

Information! If changes improve an ad's results by even a few percent, how does that affect the business? The number of applications grows, and revenue and profit grow with it, while the advertising budget stays the same.
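The arithmetic behind this claim can be sketched in a few lines of Python. The sketch assumes the improvement raises the conversion rate while the budget and cost per click (CPC) stay fixed, so the number of clicks and the ad spend do not change. All figures and the `campaign_outcome` helper are hypothetical, and `revenue_minus_ad_spend` is a simplification that ignores every cost other than advertising.

```python
def campaign_outcome(budget: float, cpc: float, conversion_rate: float,
                     revenue_per_lead: float) -> dict:
    """Simplified funnel: fixed budget -> clicks -> applications -> revenue."""
    if cpc <= 0 or conversion_rate <= 0:
        raise ValueError("cpc and conversion_rate must be positive")
    clicks = budget / cpc               # a fixed budget at a fixed CPC buys a fixed number of clicks
    leads = clicks * conversion_rate    # applications generated by those clicks
    revenue = leads * revenue_per_lead
    return {
        "clicks": clicks,
        "leads": leads,
        "revenue": revenue,
        "revenue_minus_ad_spend": revenue - budget,
        "cost_per_lead": budget / leads,
    }


# Hypothetical inputs: same budget and CPC, conversion rate improved by 5%
before = campaign_outcome(budget=1000, cpc=0.5, conversion_rate=0.03, revenue_per_lead=40)
after = campaign_outcome(budget=1000, cpc=0.5, conversion_rate=0.03 * 1.05, revenue_per_lead=40)

for key in before:
    print(f"{key:>24}: {before[key]:10.2f} -> {after[key]:10.2f}")
```

With these inputs, a 5% better conversion rate turns 60 applications into 63 for the same budget of 1,000, and the cost per application falls from about 16.67 to about 15.87.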

Another factor is ad burnout, when the advertising message stops catching the audience's attention. Of course, you can go with the flow and leave alone what “already brings applications”. But this is the wrong approach: you should keep working on improvements.

How do you A/B test ads?

An A/B test can cover any element of an ad: the call to action, image, text, title, or link. The main options are:

- Text and title. This is usually the first step. You can formulate and compare different calls to action, or compare the performance of ads with and without price information;

- Additional links (sitelinks). You can add links to promotions, shipping terms, or product category pages to your ad, and then check how this affects CTR;

- Landing page. You can try changing the color and size of the buttons on the site, or test different placements of the product image on the page;

- Display link. Remember that the displayed link does not have to match the landing page address; it helps tell the visitor what they will see after clicking the ad. So you can test different versions of the displayed link and see which one works best;

- Callouts (ad clarifications). This useful Google Ads feature should not be overlooked. Here you can list competitive advantages that do not fit into the main text or headline of the ad;

- Photos and images. While the text fields of an ad have character limits, graphic banners leave real scope for imagination. You can test a wide variety of options, from the color of the call-to-action button to different kinds of visual content. A green button may well convert better than a red one, but the hypothesis still has to be tested;

- Related (adjacent-topic) keywords. These can bring more clients from contextual advertising. An audience interests report in Google Analytics gives a rough picture of the related topics that surround your business.

Specifics of split testing in contextual advertising

- Comparing different testing intervals. This problem arises because demand for a product varies over time. A test can be considered reliable only if the intervals are chosen so that demand is roughly the same for each tested ad. When demand is seasonal, the way out is the “chess” testing method;

- Testing each ad separately rather than the whole ad group at once. This is needed to understand clearly which element of the ad affected its performance;

- Duration of the experiment. Testing takes a long time because demand for most products is unstable: it may depend on the weather, exchange rates, and so on. With this in mind, an A/B test should run until a clear “leader” emerges. This often takes several weeks;

- Heterogeneity of the target audience. This factor often lengthens the time needed to get a reliable result. Because each target group is active unevenly across time intervals, the experiment has to run longer to cover all segments;

- Low-frequency queries. This is the hardest problem of split testing in contextual advertising. For the data to be meaningful, each ad needs an adequate number of clicks (at least 100). But what if a query gets only 5-10 impressions per week? Unfortunately, the only option is to extend the testing period to several months. Conclusions can of course be drawn from a small amount of data, but they may turn out to be wrong.
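The article does not name a formal criterion for a “clear leader”. One common choice, assumed here, is a two-proportion z-test on CTR at a 5% significance level, combined with the 100-click minimum mentioned above. The function names and data in this Python sketch are hypothetical. The normal approximation behind this test becomes unreliable with very few clicks, which is one more reason low-frequency queries are hard to test.

```python
import math


def ctr_z_test(clicks_a: int, impressions_a: int,
               clicks_b: int, impressions_b: int) -> tuple[float, float]:
    """Two-proportion z-test on CTR; returns (z, two-sided p-value)."""
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    # pooled CTR under the null hypothesis that both ads perform the same
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if se == 0:
        raise ValueError("no variation in the data; the test is undefined")
    z = (ctr_b - ctr_a) / se
    # two-sided p-value for a standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def is_clear_leader(clicks_a: int, impressions_a: int,
                    clicks_b: int, impressions_b: int,
                    alpha: float = 0.05, min_clicks: int = 100) -> bool:
    """True only if both ads reached min_clicks and the CTR difference is significant."""
    if min(clicks_a, clicks_b) < min_clicks:
        return False  # not enough data yet: keep the test running
    _, p_value = ctr_z_test(clicks_a, impressions_a, clicks_b, impressions_b)
    return p_value < alpha


# Hypothetical data: the same CTRs (2.0% vs 2.4%) after one and after two periods
print(ctr_z_test(200, 10_000, 240, 10_000))  # p is slightly above 0.05
print(is_clear_leader(200, 10_000, 240, 10_000))
print(is_clear_leader(400, 20_000, 480, 20_000))
```

With the same 2.0% and 2.4% CTRs, the difference is not yet significant after 10,000 impressions per ad, but it becomes significant once the amount of data doubles. Stopping a test too early can therefore crown the wrong ad.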

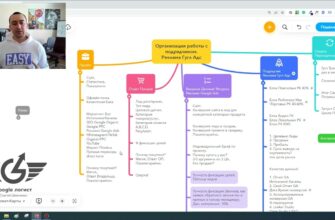

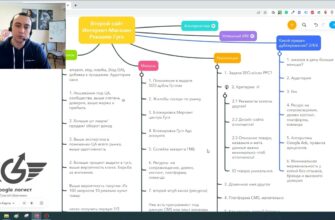

How do you create A/B tests in Google Ads?

To run an A/B test in Google Ads, you need to:

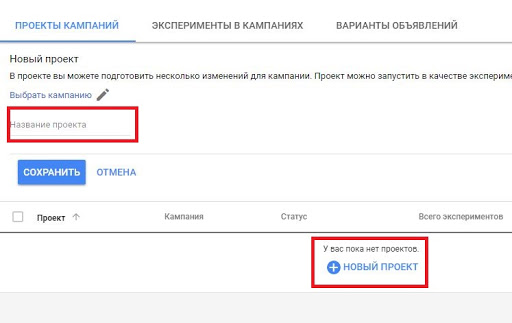

- Click the “plus” in the right sidebar of the “Projects and Experiments” tab in your ad account;

- Select the campaign from the list and name the project after the hypothesis you plan to test. The name must not match the names of other campaigns and tests. Then save the project;

- In the new project window, change the campaign settings so that they meet the conditions of the hypothesis, and apply the changes. Then select “Perform experiment” in the new window and click “Apply”;

- Set the start and end dates of the test and the share of traffic to allocate to the experiment, then save.
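Google Ads performs the traffic split itself once you set the proportion. Purely to illustrate what such a split means, here is a hypothetical Python sketch of a common general technique, deterministic hash-based bucketing: a fixed share of visitors goes to the experiment, and each visitor keeps seeing the same version. This is not a description of how Google Ads implements its split; `assign_variant` and the experiment name are made up.

```python
import hashlib


def assign_variant(user_id: str, experiment_name: str, experiment_share: float) -> str:
    """Deterministically assign a user to 'experiment' or 'base' (hypothetical helper)."""
    if not 0.0 <= experiment_share <= 1.0:
        raise ValueError("experiment_share must be between 0 and 1")
    # hashing the experiment name together with the user id keeps the assignment
    # stable within one experiment while varying it between experiments
    digest = hashlib.sha256(f"{experiment_name}:{user_id}".encode("utf-8")).digest()
    # keep 53 bits so the division yields a float strictly below 1.0
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    return "experiment" if bucket < experiment_share else "base"


# Hypothetical usage: 50% of traffic goes to the experiment
users = [f"user-{i}" for i in range(10_000)]
arms = [assign_variant(u, "buy-now-cta", 0.5) for u in users]
print(arms.count("experiment") / len(arms))  # close to 0.5
```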

The experiment has started. All that remains is to wait until enough data has been collected to draw conclusions. The results are displayed in the Campaign Experiments section. Once testing is over, you can evaluate the effectiveness of the campaign. If the result is positive, you can launch the experimental campaign by clicking “Apply”. The system will offer a choice: update the original campaign or save the experiment as a new advertising campaign.

Tips for high-quality testing

- To get reliable results, test exactly one ad element per experiment: only the image, only the text, and so on;

- Do not launch tests when seasonal factors strongly affect demand; otherwise, the results may be biased;

- Set the experiment duration so that each ad can get at least 100 clicks. Too little data may lead to unrepresentative results;

- A/B test results apply only to a specific product over a specific time interval;

- Test periods must include the same days of the week and times of day. This keeps the experiment reliable.
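The tips on click volume and days of the week can be combined into a rough planning rule. This Python sketch assumes you can estimate the campaign's average daily clicks from past data and that demand stays roughly stable (no strong seasonality) during the test. The function name, its defaults, and the example figures are hypothetical.

```python
import math


def planned_test_days(daily_clicks: float, experiment_share: float = 0.5,
                      min_clicks_per_ad: int = 100) -> int:
    """Days needed for each ad to collect min_clicks_per_ad, rounded up to whole weeks."""
    if daily_clicks <= 0:
        raise ValueError("daily_clicks must be positive")
    if not 0.0 < experiment_share < 1.0:
        raise ValueError("experiment_share must be strictly between 0 and 1")
    # the ad that receives the smaller share of traffic reaches the threshold last
    slower_share = min(experiment_share, 1.0 - experiment_share)
    days = math.ceil(min_clicks_per_ad / (daily_clicks * slower_share))
    # round up to full weeks so both ads cover the same days of the week
    return math.ceil(days / 7) * 7


# Hypothetical campaigns
print(planned_test_days(30))        # busy campaign, 50/50 split
print(planned_test_days(4))         # low-frequency queries, 50/50 split
print(planned_test_days(30, 0.2))   # only 20% of traffic to the experiment
```

With 30 clicks a day and a 50/50 split, one week is enough; at 4 clicks a day the same threshold takes 56 days, and sending only 20% of a 30-click campaign to the experiment stretches the test to 21 days.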